Activation functions are the building blocks of nearly every Machine Learning application. There are a lot, and many are also surprisingly similar to the Rectified Linear Unit (ReLU) activation function, which makes them pretty derivative. While some are pretty unique, it’s hard to really know the difference or why one is better than the other. You would have to be an absolute geek about them to know the difference.

This is surprisingly similar to Disney Princesses. Some are unique but most are pretty derivative of each other. As an adult male who’s never been to Disney World or Disneyland, I confuse most of them with each other and figured they would be a perfect thing to reimagine as activation functions.

1. The Linear Activation Function as Cinderella

Cinderella is obviously the linear activation function. It’s pretty basic: Prince Charming, f(x) = x, evil stepmother, symmetric around 0, talking animals. I think everyone agrees with this one. No controversy here. Cinderella’s a pretty one-dimensional, linear character and this activation function’s only really good for linear regression, so there ya go.

2. Rectified Linear Unit (ReLU) as Sleeping Beauty/Aurora

Sleeping Beauty is obviously a ReLU. ReLU is basically the linear activation function except it’s zero when x is negative, just like Aurora’s role in the plot of her story… zero half of the time. ReLU is also a basic building block for a lot of deep learning applications, just like Sleeping Beauty is a pretty good source of princess tropes, being pretty useless until rescued by the prince and all. I don’t really know if Aurora takes up minimal computing power with sparse computations while training like the ReLU does, but I’m definitely gonna tell my future kids that and I think you should too.
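For anyone who wants to see Cinderella and Aurora side by side, here is a minimal sketch in plain Python. The function names `linear` and `relu` are just my own labels, not from any particular library.

```python
def linear(x: float) -> float:
    """Cinderella: f(x) = x, nothing else going on."""
    return x


def relu(x: float) -> float:
    """Aurora: same as linear, but zero whenever x is negative."""
    return max(0.0, x)


# Compare the two on a few sample inputs.
for x in (-2.0, -0.5, 0.0, 1.5):
    print(x, linear(x), relu(x))
```

The sparse computation mentioned above comes from those zeros: any unit with a negative input outputs exactly 0 and contributes nothing downstream.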

3. Sigmoid Activation Function as Pocahontas

Sigmoids are pretty natural, built on e, the base of the natural log, that is. There’s not a Disney princess more natural than Pocahontas. She freakin talks to trees and whatever. She has the most animal friends, loves the outdoors, and probably doesn’t shave her armpits. Sigmoids are all about those natural logs, easily differentiable kind of like how they stole the plot for Pocahontas from James Cameron’s Avatar, pretty derivative, but still pretty unique compared to the other Disney princesses.
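The "easily differentiable" part is worth a quick sketch. Assuming the standard logistic sigmoid, 1 / (1 + e^(-x)), its derivative can be written using only its own output. The function names here are my own, and the branch on the sign of x is just a common trick to avoid overflow for very negative inputs.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # safe: x is negative, so z is in (0, 1)
    return z / (1.0 + z)


def sigmoid_grad(x: float) -> float:
    """Derivative of the sigmoid: s * (1 - s), where s = sigmoid(x)."""
    s = sigmoid(x)
    return s * (1.0 - s)


print(sigmoid(0.0), sigmoid_grad(0.0))  # 0.5 and 0.25 at the origin
```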

4. Leaky ReLU Activation Function as Snow White

If Sleeping Beauty is the ReLU, Snow White has to be the leaky ReLU. Still almost zero contribution to the plot for half of the story. They’re almost the same with an extremely small tweak. Dwarves and anything else? Now you get those negative values by multiplying x by 0.01 when it’s negative. I honestly thought Snow White was Sleeping Beauty when I started writing this, just like I can barely tell the difference between ReLU and leaky ReLU.
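The extremely small tweak looks like this in a rough sketch. The 0.01 slope is the value mentioned above; the name `leaky_relu` and the `negative_slope` parameter are my own choices for illustration.

```python
def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    """ReLU, except negative inputs are scaled by a small slope instead of zeroed."""
    return x if x > 0 else negative_slope * x


# Positive inputs pass through; negative ones shrink to 1% of their size.
for x in (-10.0, -1.0, 0.0, 3.0):
    print(x, leaky_relu(x))
```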

5. Binary Activation Function as C3PO

I don’t care about you purists out there, Disney owns Star Wars now, so C3PO is finally a Disney Princess. I mean, the Binary activation function has to be a robot and it was between this and EVE from WALL-E, who isn’t nearly as much of a princess as C3PO. Anyway, Binary is just a step function which can only really handle binary class problems on the output layer, and C3PO was only really good for translating, also one function.
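A step function is about as short as code gets. This sketch assumes a threshold of 0 and that an input exactly at the threshold maps to 1; both are conventions I picked, and other write-ups choose differently.

```python
def binary_step(x: float, threshold: float = 0.0) -> int:
    """Outputs 1 at or above the threshold, 0 below it (assumed convention)."""
    return 1 if x >= threshold else 0


# Two classes, nothing in between.
print([binary_step(x) for x in (-3.0, -0.1, 0.0, 2.5)])  # [0, 0, 1, 1]
```

One thing the code makes obvious: the output is flat everywhere except at the jump, so the gradient is zero almost everywhere, which is why it is hard to train with gradient descent.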

6. Exponential Linear Unit (ELU) as Merida

A little natural log, a little derivative Disney princess, ELU’s gotta be Merida. She’s definitely got some princess tropes going on like all the ReLU activation functions but is way more self-deterministic. That makes me think the ELU is a perfect fit since it tends to suffer less from vanishing gradients and dying neurons than the other princesses. Higher accuracy and lower training times definitely go to the one princess that can shoot straight.
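Here is a small sketch of the usual ELU definition: x for positive inputs, alpha * (e^x - 1) otherwise. The default alpha of 1.0 is a common choice that I am assuming here, not something stated above.

```python
import math


def elu(x: float, alpha: float = 1.0) -> float:
    """Exponential Linear Unit: linear for x > 0, smooth exponential below."""
    # math.expm1(x) computes e^x - 1 accurately for small x.
    return x if x > 0 else alpha * math.expm1(x)


# Negative inputs level off near -alpha instead of being cut to zero.
for x in (-50.0, -1.0, 0.0, 2.0):
    print(x, elu(x))
```

Because negative inputs still produce a nonzero output and a nonzero slope, a unit stuck in negative territory can keep learning, which is the "dying neurons" point above.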

7. Parametric Rectified Linear Unit (PReLU) as Belle

I mean, Belle’s a pretty tropish Disney princess. But at least she reads, so I guess she would have a trainable value on the negative side of her activation function. Going from that hot introvert turning down the one catch of her French town, Gaston, only to fall for a different beast, she’s definitely not differentiable. She still does homework, which you have to do a lot of to use different PReLU adjusted layers in a deep network effectively.
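The "trainable value on the negative side" can be sketched as a leaky ReLU whose slope gets its own gradient. Everything numeric below (the starting alpha, learning rate, input, and target) is made up purely for illustration, and the function names are mine.

```python
def prelu(x: float, alpha: float) -> float:
    """x for positive inputs, alpha * x otherwise; alpha is a learned parameter."""
    return x if x > 0 else alpha * x


def prelu_grad_alpha(x: float) -> float:
    """Derivative of prelu(x, alpha) with respect to alpha."""
    return 0.0 if x > 0 else x


# One hypothetical gradient-descent step on alpha for a single negative input,
# using a toy squared-error loss against a made-up target.
alpha, learning_rate = 0.25, 0.1
x, target = -2.0, -1.0
error = prelu(x, alpha) - target  # -0.5 - (-1.0) = 0.5
alpha -= learning_rate * 2 * error * prelu_grad_alpha(x)
print(alpha)  # alpha grows, pulling prelu(x, alpha) closer to the target
```

Only negative inputs contribute to alpha's gradient, so the slope is learned entirely from the side of the function that plain ReLU throws away.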

8. Swish Activation Function as Jasmine

Pet tiger? Stylish dresser? Self-deterministic? We have to choose the princess with the most sex appeal to have the sexiest activation function, Swish. It’s shaped like the ReLU, much like some of her princess tropes, but it’s differentiable, trainable with a beta parameter and damn, look at those curves. Swish even quickly converges to zero in negative space, killing off negative nodes, just like Jasmine’s no-nonsense ruling style.
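To look at those curves numerically, here is a sketch assuming the common form x * sigmoid(beta * x). The helper `_sigmoid` and the default `beta=1.0` are my own choices; in a real network beta would be a learned parameter.

```python
import math


def _sigmoid(x: float) -> float:
    """Overflow-safe logistic sigmoid (helper for this sketch)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish: the input scaled by a sigmoid gate on beta * x."""
    return x * _sigmoid(beta * x)


# Dips slightly below zero for small negative x, then heads back toward zero.
for x in (-20.0, -1.0, 0.0, 10.0):
    print(x, swish(x))
```

Note the small dip: unlike ReLU, the output is slightly negative for modest negative inputs before it flattens out toward zero.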

9. Soft Plus Activation Function as Ariel

Soft plus is a tough one. It’s also basically ReLU in shape. But it’s also much more natural. I’m sure I’m going to get a lot of negative emails over this choice because it’s pretty controversial, but it’s definitely Ariel. She’s got those ‘Oh please rescue me’ and ‘oh my gawd a boy’ Princess tropes but is also kinda natural log being half a fish and all. Sea shell bra? Come on! Total soft plus move.
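Soft plus really does earn the natural log label, since the usual definition is ln(1 + e^x). A minimal sketch, with the rearrangement below being a standard way to avoid overflow for large inputs (the function name is mine):

```python
import math


def softplus(x: float) -> float:
    """ln(1 + e^x), rearranged so exp never sees a large positive argument."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


# Looks like ReLU far from zero, but with a smooth bend at the origin.
for x in (-30.0, 0.0, 30.0):
    print(x, softplus(x))
```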

10. Tanh Activation Function as Mulan

Tanh has one hell of a steep derivative. Something that would train with a derivative like that has got to go to the only Disney princess with a training montage. Mulan is also pretty unique as far as princesses go, just like Tanh. Not quite derivative of the other Disney princesses. Tanh is bounded between -1 and 1 and not quite shaped like the ReLU. Good job for being special, Tanh/Mulan, go defeat those Huns. Mulan and Tanh do have one weakness. They are both so non-linear that with the vanishing gradient problem, if you train either over a large number of epochs you may not realize SHE’S NOT A DUDE when that gradient vanishes.
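Both the steep derivative and the vanishing gradient show up in a few lines. This sketch compares the slope of tanh with the slope of a sigmoid at zero, then multiplies ten saturated tanh slopes together as a stand-in for backpropagating through ten layers. The ten-layer setup and the input of 2.0 are assumptions for illustration only.

```python
import math


def tanh_grad(x: float) -> float:
    """Derivative of tanh: 1 - tanh(x)^2."""
    t = math.tanh(x)
    return 1.0 - t * t


def sigmoid_grad(x: float) -> float:
    """Derivative of the logistic sigmoid (fine for moderate x)."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)


# At the origin tanh is four times steeper than the sigmoid.
print(tanh_grad(0.0), sigmoid_grad(0.0))  # 1.0 and 0.25

# Away from the origin the slope collapses, and chaining layers multiplies it.
print(tanh_grad(2.0) ** 10)
```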

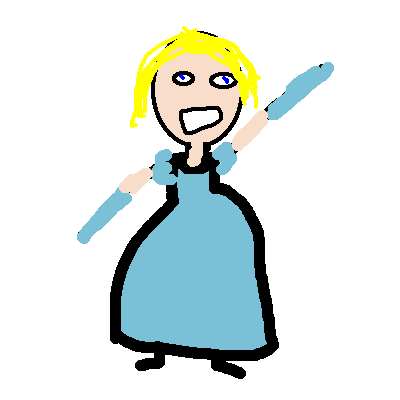

11. Soft Max Activation Function as Rapunzel

Rapunzel absolutely has to be soft max. Yeah, this was obviously going to be the hardest to do in MSPaint because the Soft Max is a multi-dimensional normalized exponential function, so I imagined Rapunzel would be perfect. She’s basically a ditzy Medusa with her blonde hair going every which direction, trapping men and stuff. I don’t know if this is in the lore yet but I figure Rapunzel would definitely be the best at multi-class identification problems, just like soft max compared to other Disney Princesses.
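"Normalized exponential" is easier to see in code than in MSPaint. This is a minimal sketch: exponentiate every score, then divide by the total so the outputs sum to 1. Subtracting the maximum first is a standard stability trick, and the example scores are made up.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Turn a list of raw scores into probabilities that sum to 1."""
    m = max(logits)  # shift by the max so exp never overflows
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Three hypothetical class scores; the largest score gets the largest share.
probs = softmax([2.0, 1.0, 0.1])
print(probs)
```

Unlike the other functions in this list, it takes a whole vector at once, which is what makes it a fit for multi-class output layers.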

12. Mish Activation Function as Tiana

Tiana is a go-with-the-flow, natural log loving, jazz playing New Orleans princess. Yeah, she falls into that whole obsessed-with-a-prince trope most of the Disney Princesses have, but let’s be honest, she’s Disney’s first and only (I hope not for long) Cajun Princess, which makes her quite unique. Tanh is basically like the other American sigmoid princess Pocahontas except just better with those larger derivatives. If you want the tastiest, most fun, freedom loving, jazzy part of America, take a weekend in NOLA. Don’t just go to the touristy Bourbon Street NOLA but the jazz bar speckled Frenchmen Street NOLA.
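For reference, Mish is usually written as x * tanh(softplus(x)), so it combines the natural log of soft plus with a tanh. A rough sketch under that definition, with helper names of my own:

```python
import math


def softplus(x: float) -> float:
    """ln(1 + e^x), rearranged to stay overflow-safe."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x: float) -> float:
    """Mish: the input scaled by tanh of its softplus."""
    return x * math.tanh(softplus(x))


# Close to the identity for large x, close to zero for very negative x.
for x in (-20.0, -1.0, 0.0, 10.0):
    print(x, mish(x))
```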
